If you’re running a marketing agency today, you’re likely navigating a maze that’s different and more intricate than ever. Sure, you have unprecedented access to more customer data than you know what to do with. But you’re also juggling changing algorithms, deciphering new data privacy laws, keeping clients happy, and creating content for an audience with the attention span of a goldfish on espresso.

Staying on top of all these responsibilities while keeping in touch with industry trends that seem to change daily isn’t for the faint of heart. To do so successfully requires more than a knack for creativity or an eye for numbers. Your agency’s success requires a blend of both, guided by insights that are timely and timeless.

To help you through that maze, we created the “Must-Have Expert Insights for Agencies in 2024” ebook. It is a distillation of the top 10 insights learned from experts at the 2023 Agency Summit. These skilled professionals have found a way out of the maze and emerged with indispensable lessons that will help your agency continue to grow and scale successfully. Whether you need advice about harnessing the beast that is AI or understanding and proving social media ROI, consider this your guide to not just surviving but thriving in the agency world of tomorrow.

Here’s a look at two of the marketing tips for agencies in the ebook. For the full version, download your FREE copy right away.

Insight No. 1: AI won’t take your job. But someone who knows how to use AI will.

Source: Christopher Penn, It’s The End of Your Agency As You Know It

During the Agency Summit, we talked to Christopher Penn, co-founder and chief data scientist at Trustinsights.ai. Penn shared the details of how AI is being used now, how it will be used in the future, and what it means for marketing agencies.

Here are the key takeaways Penn shared:

The writing’s on the wall. AI isn’t just a buzzword—it’s a seismic shift in how businesses operate. From automating routine tasks to data analytics and customer engagement, AI is becoming the backbone of innovation.

Gartner predicts that by 2025, organizations that use AI across the marketing function will shift 75% of their staff’s operations from production to more strategic activities.

For those not in the tech loop, the fear is real: “Will a machine take my job?”

The answer is nuanced. It’s true that AI will change the job market significantly, and it already has. But while AI will displace certain roles, it will also create new ones that we can’t yet envision.

More likely than not, workers who are skilled at AI will take the jobs of workers who are not. The key here is going to be adaptability and flexibility. Marketers will need to upskill and reskill to remain competitive in the job market.

Learning the basics of AI, data science, or even how to effectively integrate AI tools into your workflow can make you irreplaceable. By no means do you need to become a full-fledged data scientist overnight. But understanding how to collaborate with these new technologies will keep you ahead of those who don’t.


Reskilling is equally crucial. If your job is highly susceptible to automation, diversifying your skill set can provide a safety net. Marketing professionals, for instance, are now expected to be familiar with data analytics tools and customer relationship management software that employ AI algorithms.

This means you can’t afford to ignore prompt engineering.

Insight No. 2: Learn prompt engineering now or risk being left behind.

Source: Christopher Penn, It’s The End of Your Agency As You Know It

Using AI tools like ChatGPT or Bard in marketing operations will reduce friction and eliminate redundancy. They allow marketers to shift their budgets and resources to activities that support a more dynamic marketing organization.

Agencies should start now by ensuring that employees are trained not only on prompt engineering but also on other use cases for AI, such as automating routine tasks, transcribing calls, and writing code.

If you want to learn prompt engineering, it is crucial that you understand just how AI tools that are based on LLMs (large language models) work.

Here is a quick primer from Penn’s webinar:

First of all, what are large language models? It all begins with a phrase from John Rupert Firth in 1957, when he said, “You shall know a word by the company it keeps.” This is the basis on which all large language models work.

So what does that mean exactly?

At their core, AI language models like GPT-4 are massive neural networks trained on a large dataset of text. They’re essentially pattern recognizers that use statistical probabilities to predict the next word in a sequence, based on the words that came before it.

Training involves feeding the model tons of data and adjusting internal parameters, so that it learns to make accurate predictions. During this phase, the model is basically trying to minimize its error rate, adapting its internal “knowledge” to do better next time.

Once trained, the model can generate text based on a given prompt. It uses what it learned during training to predict what words should come next, effectively “completing” the prompt in a way that mimics human-like language.
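To make the “predict the next word” idea concrete, here is a toy sketch in Python. It is not how GPT-4 works internally (real models are neural networks trained on vast amounts of text). It simply counts which word follows which in a tiny made-up corpus and treats those counts as probabilities. The corpus and the function names are illustrative assumptions, not something from Penn’s webinar.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """'Training': count how often each word follows each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def next_word_probabilities(model, word):
    """Turn the raw follow-counts for a word into probabilities."""
    followers = model.get(word.lower(), Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}


def complete(model, prompt, max_words=5):
    """'Generation': keep appending the most likely next word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        followers = model.get(words[-1])
        if not followers:
            break  # the model has never seen anything after this word
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Made-up training text, purely for illustration.
corpus = (
    "social media marketing drives engagement . "
    "social media marketing builds trust . "
    "social media managers plan content ."
)

model = train_bigram_model(corpus)
probs = next_word_probabilities(model, "media")
print(probs)                                    # "marketing" is twice as likely as "managers"
print(complete(model, "social", max_words=2))   # social media marketing
```

The toy model only looks at one previous word, while a large language model weighs the whole prompt. That difference is why the words you put in your prompt matter so much.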

However, these models aren’t conscious and they don’t understand context or possess any sort of awareness. They’re just exceptionally good at recognizing patterns in data. So, when you’re engineering a prompt, you’re essentially framing a question in a way that aligns with patterns the model has seen in its training data.

GPT-4 and similar models are probabilistic, not deterministic. This means that they choose each next word or phrase from a range of likely candidates, so the same prompt can produce different answers. It’s up to you to guide them toward the answers or outcomes you actually find useful.
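Here is a small Python sketch of what “probabilistic” means in practice. It assumes a handful of made-up scores for candidate next words and picks one at random, weighted by score. The `temperature` setting is a common knob in LLM tools that is not covered in the article; it is included here only to show how the randomness can be turned up or down. All names and numbers are hypothetical.

```python
import math
import random


def sample_next_word(scores, temperature=1.0, rng=None):
    """Pick one word at random, weighted by its score (softmax sampling)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    rng = rng or random.Random()
    words = list(scores)
    # Lower temperature exaggerates the gap between high and low scores.
    scaled = [scores[w] / temperature for w in words]
    top = max(scaled)
    weights = [math.exp(s - top) for s in scaled]  # subtract max for numerical stability
    return rng.choices(words, weights=weights, k=1)[0]


# Hypothetical scores for the word that follows "Great content builds ..."
scores = {"trust": 2.0, "engagement": 1.5, "sales": 1.0}

rng = random.Random(42)  # fixed seed so the demo is repeatable
varied = [sample_next_word(scores, temperature=1.0, rng=rng) for _ in range(200)]
focused = [sample_next_word(scores, temperature=0.01, rng=rng) for _ in range(200)]

print(sorted(set(varied)))   # several different words show up
print(sorted(set(focused)))  # effectively only the top-scoring word
```

This is why asking the same question twice can give you two different answers, and why a clearer prompt (which shifts the scores toward what you want) tends to give more consistent results.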

“The key takeaway from understanding prompt engineering is that the more relevant words you use in the prompt, the better your prompts will perform, and the better your results will be.” (Christopher Penn)

The prompt is crucial to getting a good result as it sets the stage for the model’s output. It’s like giving someone a topic for improv: The clearer and more specific you are, the closer the response will align with your expectations.

Guiding a language model toward useful outcomes is easier if you follow some simple rules when creating your prompt:

- Precision. Consider the prompt your way of setting the boundaries or parameters within which the model operates. A vague prompt might get you an answer that’s technically correct but not really what you’re looking for. So, better to be precise and craft your prompt with specific language. Instead of asking, “Tell me about marketing,” you could ask, “What are innovative strategies for improving customer retention in e-commerce?”

- Context. Give enough background information. The model doesn’t know what it doesn’t know, so a bit of framing helps. For example, you’ll want to provide the end goal of your request, who the intended audience is, the format, the tone, and whether there are any limitations like a specific word count.

- Constraints. Limit the scope of the question. If you ask for “ways to improve email marketing,” you’ll get a broad range of answers. But if you ask for “three ways to improve email marketing campaign open rates for a social media marketing agency,” you’re likely to get a more focused response.

- Iteration. If the first answer isn’t perfect, refine your prompt and ask again. Think of it as a conversation where you nudge the model toward the answer you want.

- Multiple prompts. Sometimes, asking the same question in different ways can help. Doing so can give you a broader range of answers to choose from or highlight different perspectives of the same issue.

- Direct commands. You can instruct the model to think step by step or debate pros and cons before it settles on an answer. Commands like “Give a detailed explanation” or “Summarize the key points” can steer the output as well. For example, if I get an answer that is too basic or general, I tell ChatGPT so. I respond with, “This feels pretty generic and basic. I know that you are capable of writing at a much higher level than this.” It will usually reply with something like, “You’re right – thanks for the nudge,” and then go on to provide more in-depth, complex information.

- Feedback loop. Take what the model gives you, refine it, and feed it back into the model. This process can help you get more nuanced or complex answers.
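If your team writes a lot of prompts, the first three rules (precision, context, and constraints) can be turned into a reusable template. The Python sketch below is one possible way to do that. The `build_prompt` helper and its field names are assumptions made for illustration; they don’t come from any AI tool or from Penn’s webinar. The output is plain text you could paste into ChatGPT or a similar tool.

```python
def build_prompt(task, goal=None, audience=None, tone=None,
                 output_format=None, constraints=()):
    """Assemble a prompt from a precise task plus optional context and limits."""
    lines = [task.strip()]  # precision: one specific, clearly worded request
    context = [
        ("Goal", goal),
        ("Audience", audience),
        ("Tone", tone),
        ("Format", output_format),
    ]
    for label, value in context:
        if value:  # only include the context you actually provided
            lines.append(f"{label}: {value}")
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in constraints)
    return "\n".join(lines)


prompt = build_prompt(
    task="Give three ways to improve email marketing campaign open rates.",
    goal="Win back subscribers who stopped opening our newsletter",
    audience="Clients of a social media marketing agency",
    tone="Practical and friendly",
    output_format="Numbered list",
    constraints=["Under 150 words", "No jargon"],
)
print(prompt)
```

A template like this won’t replace iteration and feedback, but it makes it harder to forget the audience, format, or word count when you’re in a hurry.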

It’s definitely not an exact science, and I’d say it’s more of an art form—one you can get better at with practice.

Sometimes, the responses you get from ChatGPT will surprise you. It will give insights or a perspective that you hadn’t even considered—so it’s worth playing around with.

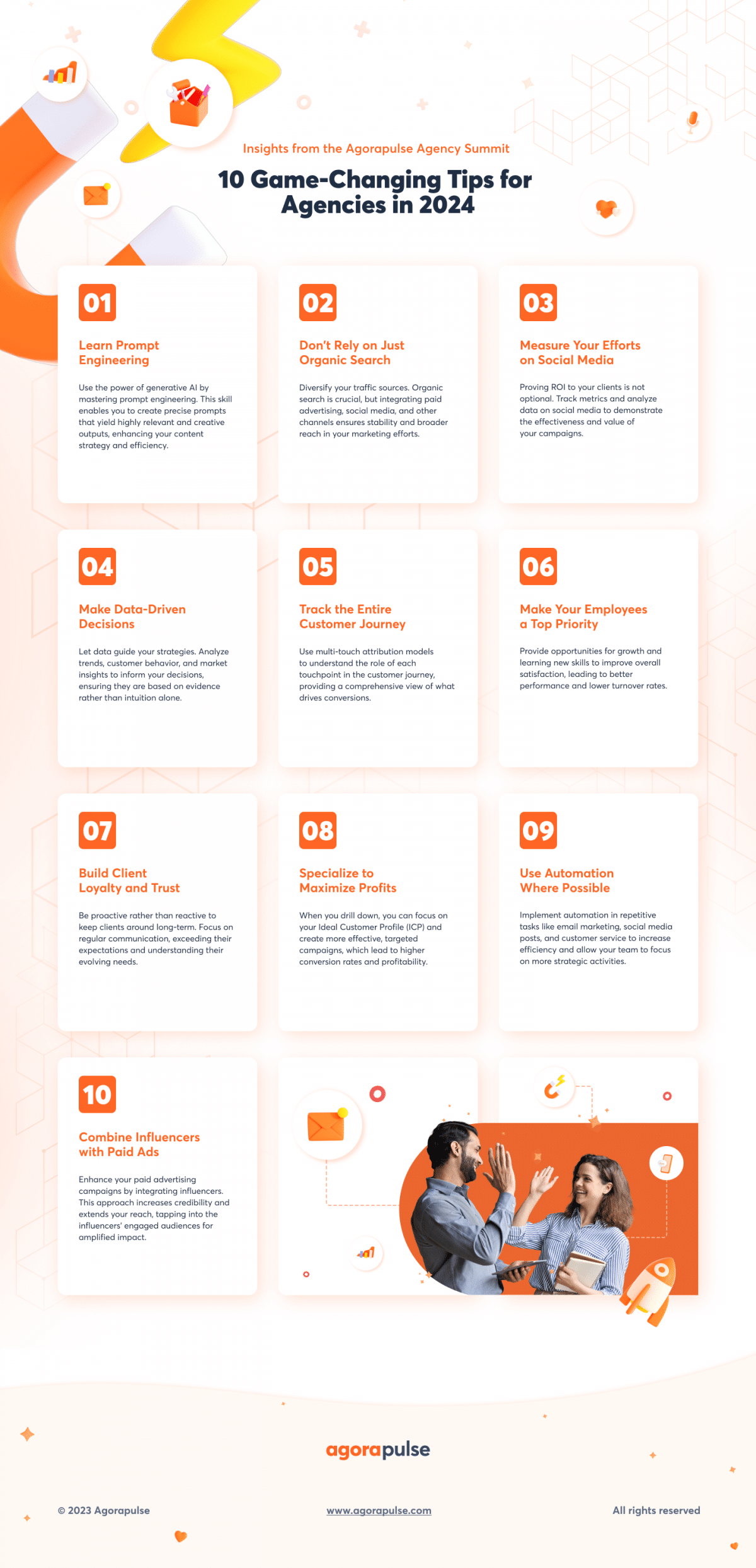

Marketing Tips infographic

To get more insights, download your free copy of “Must-Have Expert Insights for Agencies” right away.

![Game-Changing Insights for Agencies in 2024 [Free Ebook]](https://static1.agorapulse.com/blog/wp-content/uploads/sites/2/2023/12/Have-Agency-Insights-for-2024-Pinterest-scaled.jpg)